In driver-car interaction, effective communication is vital for safety and operational efficiency. Conventional communication methods, such as voice commands and manual inputs, can be impractical and unsafe, especially in noisy or dynamic environments. Lipreading offers a compelling alternative by using visual cues from lip movements and facial expressions. However, existing lipreading techniques typically depend on RGB cameras, which can struggle with the variable lighting conditions found in vehicle interiors, making near-infrared imaging a more suitable option. Near-infrared data is scarce, however, and merely fine-tuning RGB models on it is inadequate. To overcome this limitation, we propose a knowledge distillation approach that transfers features from pre-trained RGB models to near-infrared models, thereby enhancing performance with the limited near-infrared data available. Additionally, we introduce LR-CAR, the first dual-modality dataset for driver-car interaction, which includes both RGB and near-infrared modalities. Our results indicate that this method significantly boosts lipreading performance, achieving a 26.51% improvement over basic fine-tuning.

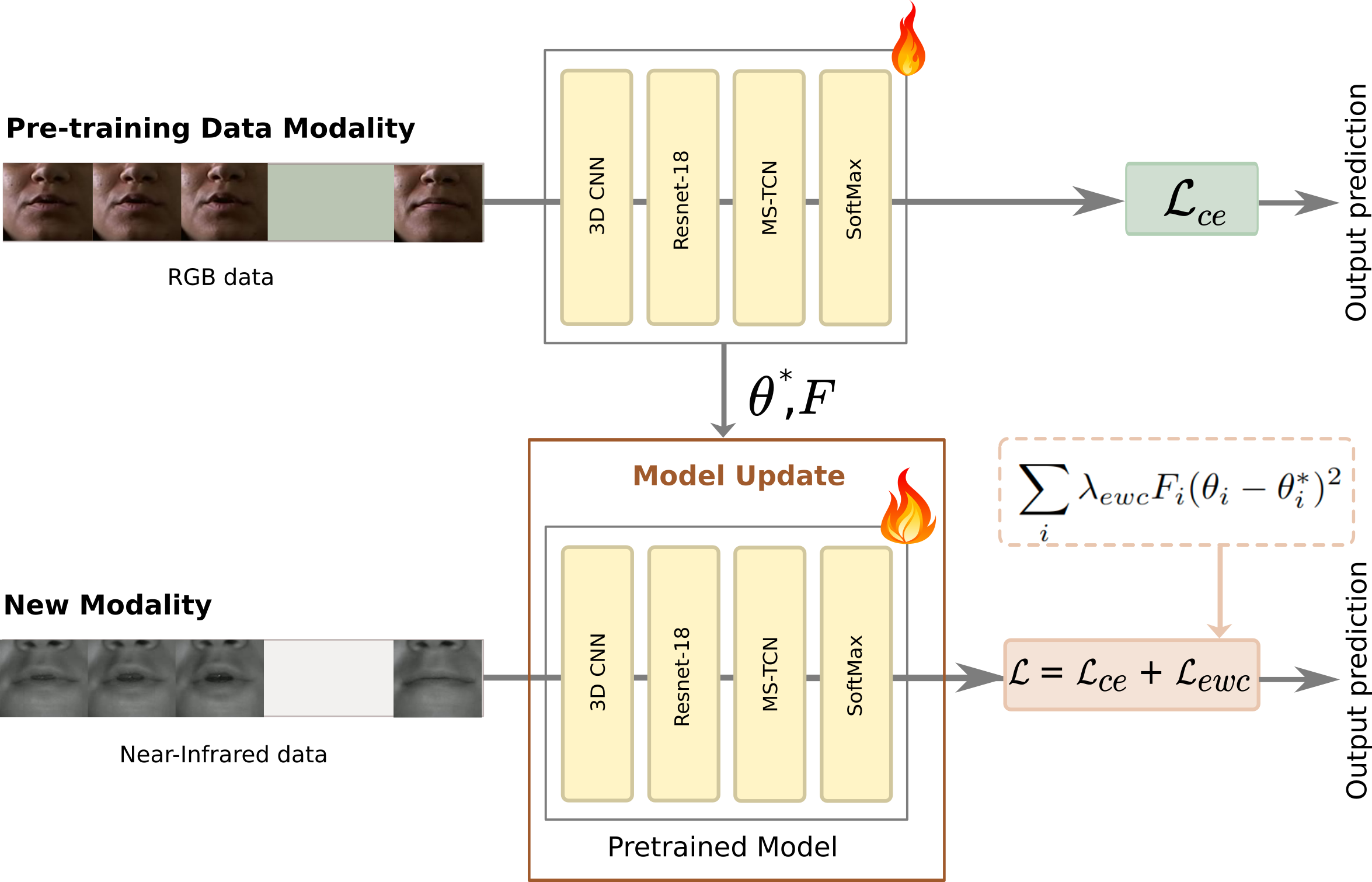

Overview of the Elastic Weight Consolidation (EWC) method across two training phases. In Phase 1, the model is trained on RGB data, and key parameters are identified using the Fisher information matrix. In Phase 2, the model is trained on near-infrared data while a regularization term protects the parameters that were critical in Phase 1. This approach allows the model to retain RGB knowledge while adapting to near-infrared input.
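
The two phases described in the caption can be illustrated with a minimal sketch. It assumes the standard EWC formulation, in which the Fisher information matrix is approximated by its diagonal (the mean of squared per-sample gradients from Phase 1) and the Phase 2 loss adds a quadratic penalty weighted by a strength `lam`. The function names, the plain-list parameter representation, and the value of `lam` are hypothetical and are not taken from the source.

```python
def diagonal_fisher(per_sample_grads):
    """Approximate the diagonal of the Fisher information matrix.

    per_sample_grads: list of gradient vectors (one per Phase 1 sample),
    each a list of floats with one entry per model parameter.
    Returns the mean squared gradient for each parameter.
    """
    n = len(per_sample_grads)
    dim = len(per_sample_grads[0])
    return [sum(g[i] ** 2 for g in per_sample_grads) / n for i in range(dim)]


def ewc_penalty(theta, theta_star, fisher, lam):
    """Quadratic EWC penalty: (lam / 2) * sum_i F_i * (theta_i - theta*_i)^2.

    theta: current parameters during Phase 2 (near-infrared training).
    theta_star: parameters saved at the end of Phase 1 (RGB training).
    fisher: diagonal Fisher estimate from Phase 1.
    lam: assumed regularization strength (hypothetical hyperparameter).
    """
    return 0.5 * lam * sum(
        f * (t - ts) ** 2 for f, t, ts in zip(fisher, theta, theta_star)
    )


def ewc_penalty_grad(theta, theta_star, fisher, lam):
    """Gradient of the penalty with respect to theta."""
    return [lam * f * (t - ts) for f, t, ts in zip(fisher, theta, theta_star)]


def phase2_loss(nir_task_loss, theta, theta_star, fisher, lam):
    """Total Phase 2 objective: near-infrared task loss plus the EWC penalty."""
    return nir_task_loss + ewc_penalty(theta, theta_star, fisher, lam)


# Toy example: the first parameter mattered in Phase 1, the second did not.
fisher = diagonal_fisher([[1.0, 0.0], [3.0, 0.0]])
penalty = ewc_penalty([1.0, 2.0], [0.0, 0.0], fisher, lam=2.0)
```

In this toy case only the first parameter is penalized for moving away from its Phase 1 value, which is the sense in which the regularization term "protects" critical parameters while leaving the others free to adapt.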

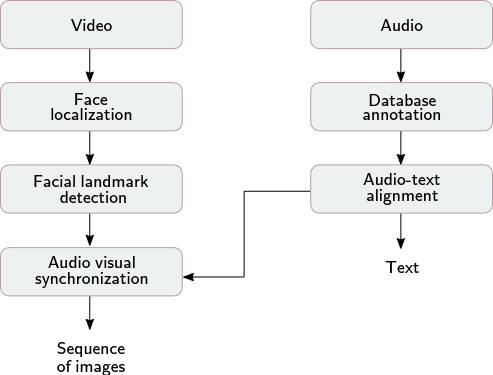

Data processing was performed with an automated pipeline.

The LR-CAR dataset was recorded simultaneously with two cameras: an RGB camera and a near-infrared camera.

A total of 29 speakers took part, yielding 1044 utterances.

Each speaker repeated 12 representative car commands three times.

| ID | Command | ID | Command |
|---|---|---|---|
| 1 | Time to arrival | 7 | I need a break |
| 2 | Cooler | 8 | Take me home |
| 3 | Warmer | 9 | Hello |
| 4 | Mute | 10 | Take me to work |
| 5 | Weather forecast | 11 | Accept call |
| 6 | I feel fine | 12 | Reject call |
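
As a quick consistency check on the figures above, the following sketch enumerates every (speaker, command, repetition) combination and confirms that 29 speakers, 12 commands, and 3 repetitions give 1044 utterances. The command list is copied from the table; the tuple layout and the function name are illustrative assumptions, not the dataset's actual file structure.

```python
# Commands as listed in the table, keyed by their ID.
COMMANDS = {
    1: "Time to arrival",
    2: "Cooler",
    3: "Warmer",
    4: "Mute",
    5: "Weather forecast",
    6: "I feel fine",
    7: "I need a break",
    8: "Take me home",
    9: "Hello",
    10: "Take me to work",
    11: "Accept call",
    12: "Reject call",
}
NUM_SPEAKERS = 29
REPETITIONS = 3


def enumerate_utterances(num_speakers=NUM_SPEAKERS, commands=COMMANDS,
                         repetitions=REPETITIONS):
    """List every (speaker, command_id, repetition) triple, all 1-indexed."""
    return [
        (speaker, command_id, repetition)
        for speaker in range(1, num_speakers + 1)
        for command_id in sorted(commands)
        for repetition in range(1, repetitions + 1)
    ]


utterances = enumerate_utterances()
print(len(utterances))  # 29 * 12 * 3 = 1044
```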